Evidence and Innovation: the tried, tested and boring

Evidence and innovation – these are two words familiar to anyone reading about humanitarian programming. Nowadays everything has to be “evidence-based”, judged against “indicators”, monitored, measured and evaluated. And great weight is given to innovation, even to the extent that one of Oxfam’s measures of a family’s resilience is its ability to innovate. We have an NGO called Evidence Aid, the Humanitarian Evidence Programme, and a fund for innovation – the Humanitarian Innovation Fund.

Instinctively, I distrust both words, and not only because of a dislike of buzz-words that come and go. I suggest that there is a degree of mutual exclusivity: you cannot be both evidence-based and innovative.

First, evidence. Humanitarian responses are necessarily messy, unpredictable and of short duration. And each tragedy is unique. If the evidence we are looking for is a demonstration of the efficacy of an approach, then it can only be researched after that approach has been implemented. Against what control can this data be assessed? It cannot be judged against the situation before the disaster or conflict, precisely because there has been a disaster and that changes everything. There are ethical considerations in comparing village A, where, let’s say, houses were built, with village B, where there was no intervention. Any SurveyMonkey-style questionnaire that probes beneficiary satisfaction has to be so superficial and simplistic as to be statistically meaningless. It might give a snapshot, an idea of problems, but it can never constitute evidence in any meaningful way.

Even if there were a way to gather evidence in a statistically rigorous manner, it could not be transferred from one disaster to another, as each is unique. Imagine transferring evidence from Haiti to the next major urban earthquake in, say, Tehran, Dhaka or Guatemala City.

The drive for evidence often comes from donors, and this can push operational agencies towards less-than-ideal proposals. Houses, bags of beans and latrines can all be counted. Even the number of participants on a training course can be counted. Teams of M&E staff (or MEL or even MEAL) count ad absurdum, disaggregating by age, sex, whatever. This only provides us with evidence that the money has been spent. It tells us nothing of the quality, impact or effectiveness of the work. Moreover, important but less tangible approaches that do not lend themselves to counting tend to be overlooked.

None of this is an argument against good information management and learning. Some things can be easily counted, while others resist measurement and probably should not be measured. The impact of housing on livelihood recovery, for example, is very important, but I defy anyone to measure it. The number of houses can be counted, as can the number of sewing machines distributed or fishing boats repaired; but the effect of a shelter programme on economic recovery can only be surmised. We should count and measure what we can and learn from successes and failures; but it is a mistake to claim the results as evidence.

Learning from practice is, of course, essential. Indeed, it is vitally important that we have the means to make good, informed decisions that translate into effective practice. However, to repeat, the humanitarian world is messy and unpredictable, and we have to rely on common sense, wisdom, experience, local knowledge and tried and tested tools. We should not confuse information (or, worse, opinion) with evidence.

Returning years later to evaluate the effect of a shelter programme is probably the best way to gather information that can inform future practice. Even then, evidence can be elusive: 90% occupancy is not necessarily evidence of success (it may simply mean that people had no alternative), but 20% occupancy is surely evidence of failure. However, such studies are rare and inevitably context-specific. On the other hand, mid-term and final evaluations are commonplace requirements written into most post-disaster shelter projects. Of course they can be informative, but they will rarely provide hard evidence of the success or failure of the project. Once again, the best we can hope for is a picture, a snapshot, the anecdotal testimony of stakeholders – and this can be very informative and useful, but it hardly provides the kind of evidence that would be considered statistically relevant and reliable.

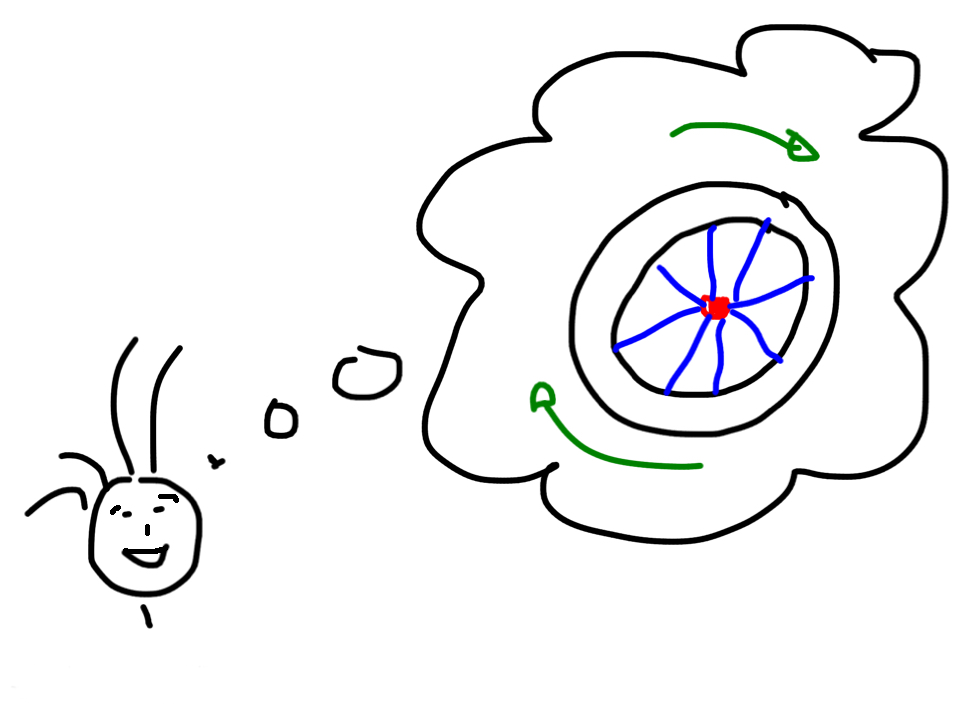

And now for innovation. Within the humanitarian sector the definition of innovation has been so manipulated that it bears little relationship to its normal English usage. (The Oxfam working paper referred to in the first paragraph describes “innovation potential” as the “ability to take appropriate risks and positively adjust to change”.) Nonetheless, it is a word in common use, and most of us understand it to mean something new, something that has not yet been tried and tested. It follows that there can be no evidence of its impact in practice over the long term. Innovation and evidence are unnatural bedfellows: if there is evidence that something works, then it is clearly not an innovation. Even the HIF’s vision statement – “A humanitarian system that is capable of innovating and adapting …” – acknowledges a certain internal contradiction.

But why, anyway, this infatuation with innovation? Where is the evidence (?) that innovation delivers much in the way of results? Of course there are exceptions, but the fact that they are exceptions proves the rule: Plumpy’Nut, possibly; cash transfer programming, maybe. In the latter case it is the technology (mobile phones, etc. – someone else’s innovation) that is the innovative part, while cash transfer as a method of aid delivery has been around a long time. The fact that something new is tried out (rental subsidy, for example) might qualify it for the label “innovative”, but that, in itself, does not give it any special status. There is nothing intrinsically good in the search for the innovative or the new. We are grateful for Velcro, cable ties and antibiotics, but they all remained valuable long after their novelty had worn off.

Most of us try to learn from and build upon the experience of our mentors and peers. There are thousands of artists for every Michelangelo; thousands of engineers for every Brunel.

Equally importantly, should we even be considering innovation as part of humanitarian work? Is it appropriate to use other people’s misfortune as a playground for testing our new ideas? Perhaps it sometimes has a place in a development setting, but in the pain and rush of a crisis, staying with what we know works well seems to make sense. We in the shelter sector rail against the constant bombardment of (often wacky) new ideas and would love to see a consolidation of tried and tested practice, so often neglected. Of course change happens, but good change is incremental: the opposite of innovation.

This would never be the subject of a TED talk. Not only is it dull, but it is in praise of the dull. If the tried, tested and boring is what best serves the affected population then we should stick with it, improving it gently as time goes by. It is where we are most likely to find reliable evidence and least likely to find innovation.

Bill Flinn is an independent consultant, architect, builder and teacher.

Bill lectures at the Centre for Development and Emergency Practice, Oxford Brookes University, UK.